Adjusting the Seat

Introduction

To kick this off, think about when tools like Google Drive and Dropbox enabled real-time document collaboration. It was a massive productivity boost. No more emailing attachments like proposal_v7_FINAL_really_final.docx back and forth or tracking versions through filenames.

Companies adjusted, workflows became ergonomic, and collaboration became mostly frictionless.

That model still exists today — but something changed quietly.

We’re still collaborating in shared documents.

We’re just no longer doing the thinking inside them.

The new workflow nobody talks about

On the surface, the process today looks identical. Most companies, even highly technical ones, still work primarily inside shared docs, leaving comments and reaching consensus through collaboration.

But the direction of reasoning changed.

Previously:

Person → Document → Person

Now:

Person → AI → Document → Person → AI → Document

The document remained the meeting place.

The thinking moved outside of it.

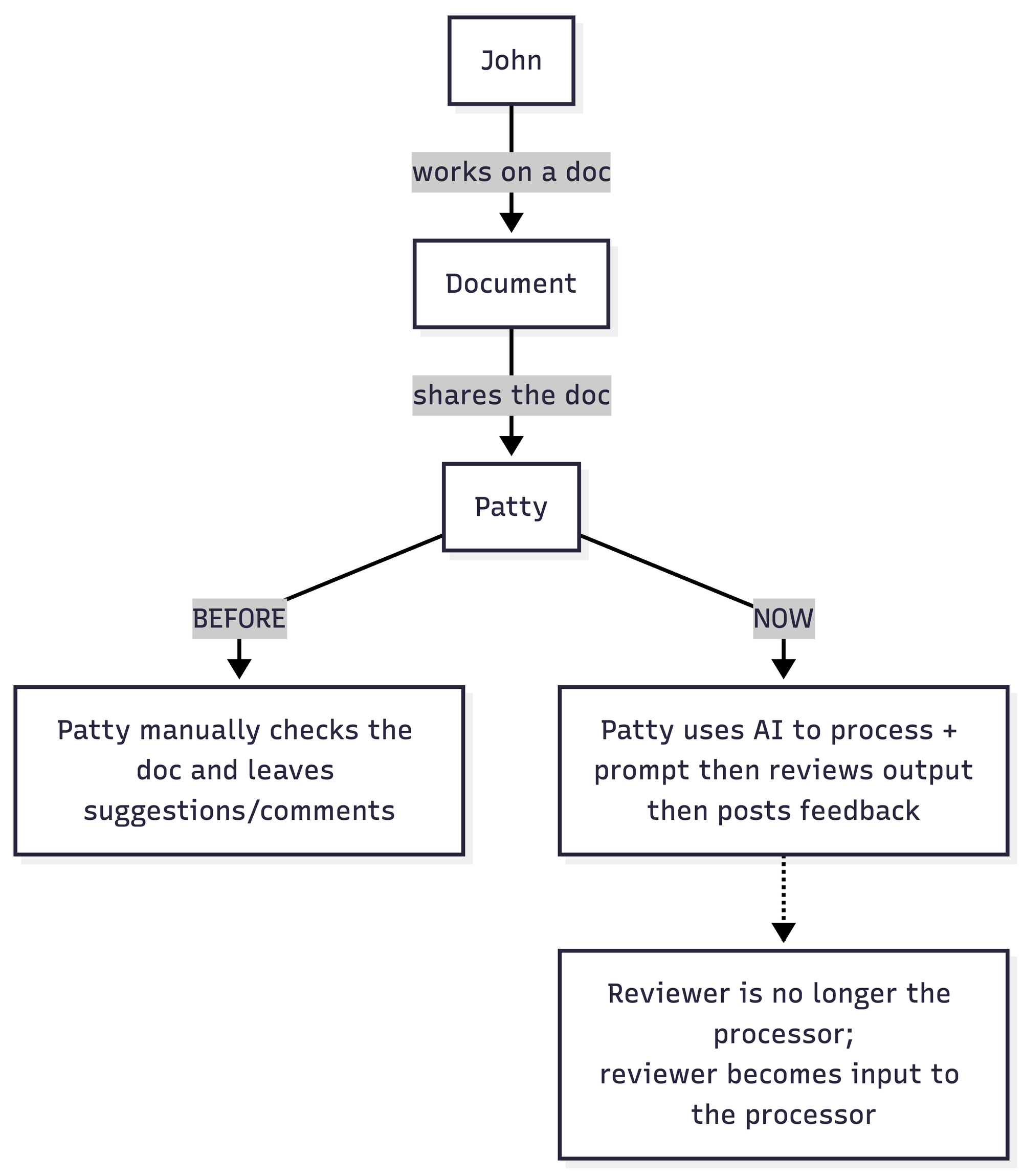

What actually happens now

A typical review cycle looks like this:

- A document is written

- It gets shared for review

- The reviewer copies it into AI

- The reviewer provides perspective through a prompt

- AI processes the document

- The result returns into the shared document as feedback

Before AI, the reviewer was the processor.

Now the reviewer is the input to the processor.

The human became a translation layer between the organization and machine reasoning. We could call the new workflow “prompt-mediated collaboration.”

Before vs Now — where reasoning lives

Before:

- Reviewer reads document

- Reviewer interprets it

- Reviewer writes suggestions

Now:

- Reviewer reads document

- Reviewer instructs AI how to interpret it

- AI writes suggestions

- Reviewer approves the interpretation

The person still signs the comment — but the reasoning path is different.

The collaboration surface stayed the same. The cognitive process relocated.

The fork that nobody sees

Now that the process is clear, one specific point is still worth mentioning. When a reviewer pastes the main doc into their own AI tool, they follow up with a prompt, and this is where they actually shape the outcome of the document with their opinion on the topic. To make it concrete: each department working on the document will emphasise its own angle in the prompt, essentially forking the document copy into a "department-first objectives" communication. It is also highly plausible that, when departments trade opinions this way, the last department to sync with the help of AI has a disproportionate effect on the final outcome, because AI prompts draw on sources that department employees supply from their own individual context.

| Role | What they tell the AI to care about |
|---|---|
| Marketing | Emphasize value & narrative |
| Engineering | Preserve correctness |
| Product | Improve clarity |
| Legal | Reduce risk |
| Finance | Justify cost |

So instead of one document being reviewed by multiple perspectives,

multiple AI interpretations of the document are merged into one place.

This creates a hidden fork.

Not a version fork — an interpretation fork.
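The fork can be made visible with a minimal sketch. It assumes the department instructions from the table above and two hypothetical helpers, `build_prompt` and `fingerprint`, and it calls no real AI service. It only shows that each reviewer's effective input is different even though the shared document is identical.

```python
import hashlib

# Illustrative department instructions, copied from the table above.
DEPARTMENT_FOCUS = {
    "Marketing": "Emphasize value & narrative",
    "Engineering": "Preserve correctness",
    "Product": "Improve clarity",
    "Legal": "Reduce risk",
    "Finance": "Justify cost",
}


def build_prompt(document: str, focus: str) -> str:
    """Combine a department's private instruction with the shared document."""
    return f"Review this document. Priority: {focus}\n\n{document}"


def fingerprint(text: str) -> str:
    """Short, stable identifier for the exact input a model would receive."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]


def interpretation_forks(document: str) -> dict[str, str]:
    """Map each department to the fingerprint of its effective model input."""
    return {
        dept: fingerprint(build_prompt(document, focus))
        for dept, focus in DEPARTMENT_FOCUS.items()
    }


# Placeholder document text, used only for illustration.
document = "Q3 launch proposal: ship the new onboarding flow in September."
forks = interpretation_forks(document)
for dept, fp in forks.items():
    print(f"{dept:<12} {fp}")
```

One document goes in and five distinct fingerprints come out. The shared doc never records which of those inputs produced a given comment.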

Cross-department interpretation drift

Traditional collaboration conflict:

People disagree on edits.

AI collaboration conflict:

People agree on edits but disagree on meaning, because each reviewer processed a different internal version generated by different instructions. So the merge conflict is invisible.

It also runs deeper, because AI outputs overwrite context more strongly than human edits do.

In old collaboration:

- humans merge ideas

In AI collaboration:

- outputs replace ideas

This creates algorithmic authority drift: whoever prompts last becomes the de facto decision maker. That’s a real, emerging management issue.

This is not version control failure. This is interpretation drift.
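The difference between merging and replacing can be shown with a deliberately simplified toy model. It assumes that an AI pass regenerates the text completely and discards earlier emphasis, which is an exaggeration of real behavior. The function names and the review order are hypothetical.

```python
def human_merge(current: list[str], suggestion: str) -> list[str]:
    """Human-style review: a new idea is added next to the existing ones."""
    return current + [suggestion]


def ai_rewrite(current: list[str], suggestion: str) -> list[str]:
    """AI-style pass (toy assumption): the regenerated text replaces
    whatever was there, so `current` is intentionally ignored."""
    return [suggestion]


# Hypothetical review order: (department, what its pass emphasizes).
review_order = [
    ("Marketing", "value narrative"),
    ("Engineering", "correctness"),
    ("Legal", "risk reduction"),
]

merged: list[str] = []
rewritten: list[str] = []
for _dept, emphasis in review_order:
    merged = human_merge(merged, emphasis)
    rewritten = ai_rewrite(rewritten, emphasis)

print(merged)     # ['value narrative', 'correctness', 'risk reduction']
print(rewritten)  # ['risk reduction']
```

In the merge model, the order of reviewers does not change which ideas survive. In the replace model, only the last reviewer's emphasis remains, which is the authority drift described above.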

Where ergonomics breaks

Document tools were designed around a simple assumption:

Everyone reading the document interprets the same text.

AI breaks that assumption. Now everyone reads the same text but through different reasoning engines configured privately/individually. So teams start debating outcomes instead of intentions.

Meetings suddenly feel strange:

- Everyone reviewed it.

- Everyone is confident.

- Nobody aligned.

They didn’t process the same document. They processed different machine interpretations.

So the real problem object is:

context accumulation

AI output depends on:

- prompt

- hidden assumptions

- past conversations

- personal model usage habits

- temperature / system prompt

- tool memory

- embeddings

This is the core of the problem: each department, and each individual worker, has their own accumulated context, which also feeds on their past conversations. That context may even include bias or incorrect data if individuals are not performing regular context updates, which fragments the source of truth down to the individual level.

At the same time, understanding context accumulation allows us to utilise it. The perspective shift is to start treating context accumulation as a company-wide investment: a well-maintained library of cross-department truths and variables that AI can draw on for every single prompt output.

Adjusting the seat — Prompt design first

This tells us that the key is strong foundational context and prompt design. Instead of fragmenting into individual context and prompt silos, we have a much better chance of faster, more accurate (in terms of company culture) outputs by using prompt design libraries backed by shared, well-maintained context libraries.

If reasoning happens inside AI, collaboration must align before processing, not after editing.

Instead of:

People individually process → then reconcile

We move to:

People align perspective → then process

The task stops being document editing and becomes shared interpretation design.

The prompt becomes the new draft.

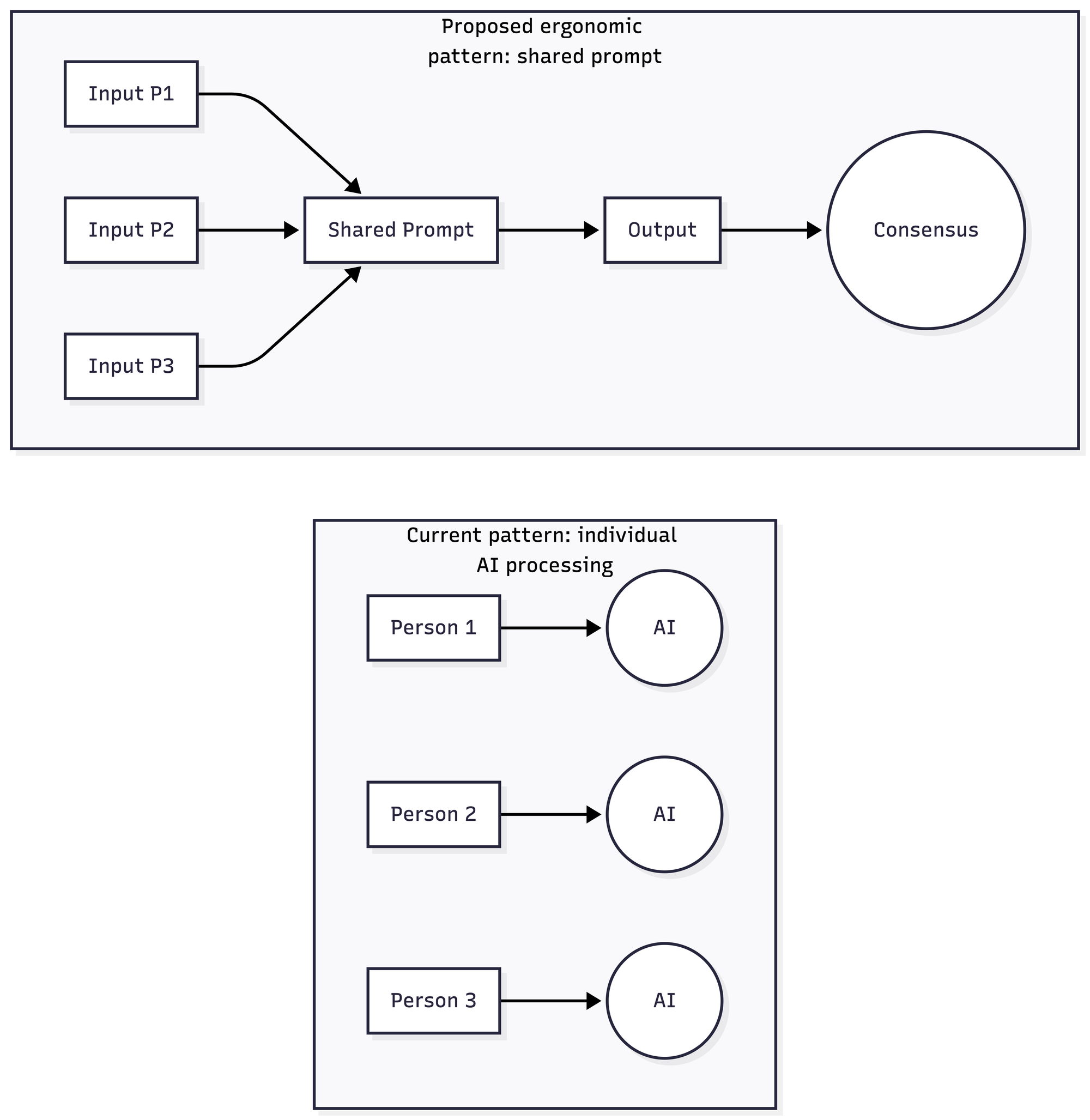

Two models of work

Current model (individual processing)

Each participant runs their own AI pass and merges outputs afterward.

Result: consensus attempts after divergence.

Ergonomic model (shared processing)

Participants align interpretation inputs first, then generate output.

Result: consensus happens before divergence.

Not more meetings.

Less re-interpretation.

What changes operationally

Organizations already maintain knowledge about themselves:

- brand tone

- product truth

- legal boundaries

- positioning

- constraints

Right now employees manually re-explain this to AI every time.

Instead, this becomes a maintained baseline used before any processing.

So employees stop explaining the company to AI

and start explaining only the task.
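A minimal sketch of such a baseline, assuming a hypothetical `CompanyBaseline` record whose fields mirror the list above. The field values are placeholders, and `build_task_prompt` is an illustrative helper, not part of any real tool.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class CompanyBaseline:
    """Hypothetical shared context, maintained once for the whole company."""
    brand_tone: str
    product_truth: str
    legal_boundaries: str
    positioning: str
    constraints: str

    def render(self) -> str:
        """Render every field as a 'name: value' line."""
        return "\n".join(
            f"{name.replace('_', ' ')}: {value}"
            for name, value in asdict(self).items()
        )


def build_task_prompt(baseline: CompanyBaseline, task: str) -> str:
    """Prefix the shared baseline so the employee only describes the task."""
    return f"Company context:\n{baseline.render()}\n\nTask:\n{task}"


# Placeholder values; a real baseline would be owned by each department.
BASELINE = CompanyBaseline(
    brand_tone="<maintained by marketing>",
    product_truth="<maintained by product and engineering>",
    legal_boundaries="<maintained by legal>",
    positioning="<maintained by marketing and product>",
    constraints="<maintained by finance and engineering>",
)

marketing_prompt = build_task_prompt(BASELINE, "Draft the launch announcement.")
engineering_prompt = build_task_prompt(BASELINE, "Review the API section.")
print(marketing_prompt)
```

Both prompts start from the same company context and differ only in the task, so departments shape one shared reasoning layer instead of re-describing the company in private.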

What we achieved

The AI stops improvising company identity per person. Departments no longer overwrite each other indirectly. They influence the same reasoning layer. Developers generate code aware of communication goals. Marketing generates copy aware of technical limits.

Not equal influence — contextual influence.

The shift

Document collaboration solved version control of text. AI introduces version control of interpretation. Today we manually reconcile interpretations after generation. Work process ergonomics suggests aligning interpretation before generation.

Another seat adjustment. The document is still where decisions appear.

But alignment now happens one layer earlier - where the reasoning begins.