The Verifiable Privacy Exercise

A concept for verifiable AI moderation built on Oasis Network

Imagine you are building a messaging app with true end-to-end encryption. No compromises. Bulletproof. You want every message to live inside a Trusted Execution Environment (TEE), where no third party — not even you — can ever read the content.

The problem arrives the moment you try to publish it.

Both Apple's App Store and Google Play require that apps handling user-generated content have mechanisms to prevent abuse. Google's 2025 policy update made this explicit: user-generated content must be filterable for harmful material. This puts every privacy-focused messaging app in the same uncomfortable position — find the lowest acceptable compromise between privacy and control, and hope users trust the marketing.

Signal is the most honest example of how this plays out. It carves no exceptions out of its encryption: the E2E is genuine. What it cannot offer is any form of verifiable moderation. Regulators, auditors, and users have no way to confirm that abuse is being handled the way the policy says it is. Signal's terms of service prohibit illegal use, and when served with court orders it can confirm that an account exists and when it last logged in; that's essentially all it can provide. The encryption is real. The verifiability gap is too.

That gap is what this concept addresses.

Step 1 — Encryption Without Exceptions

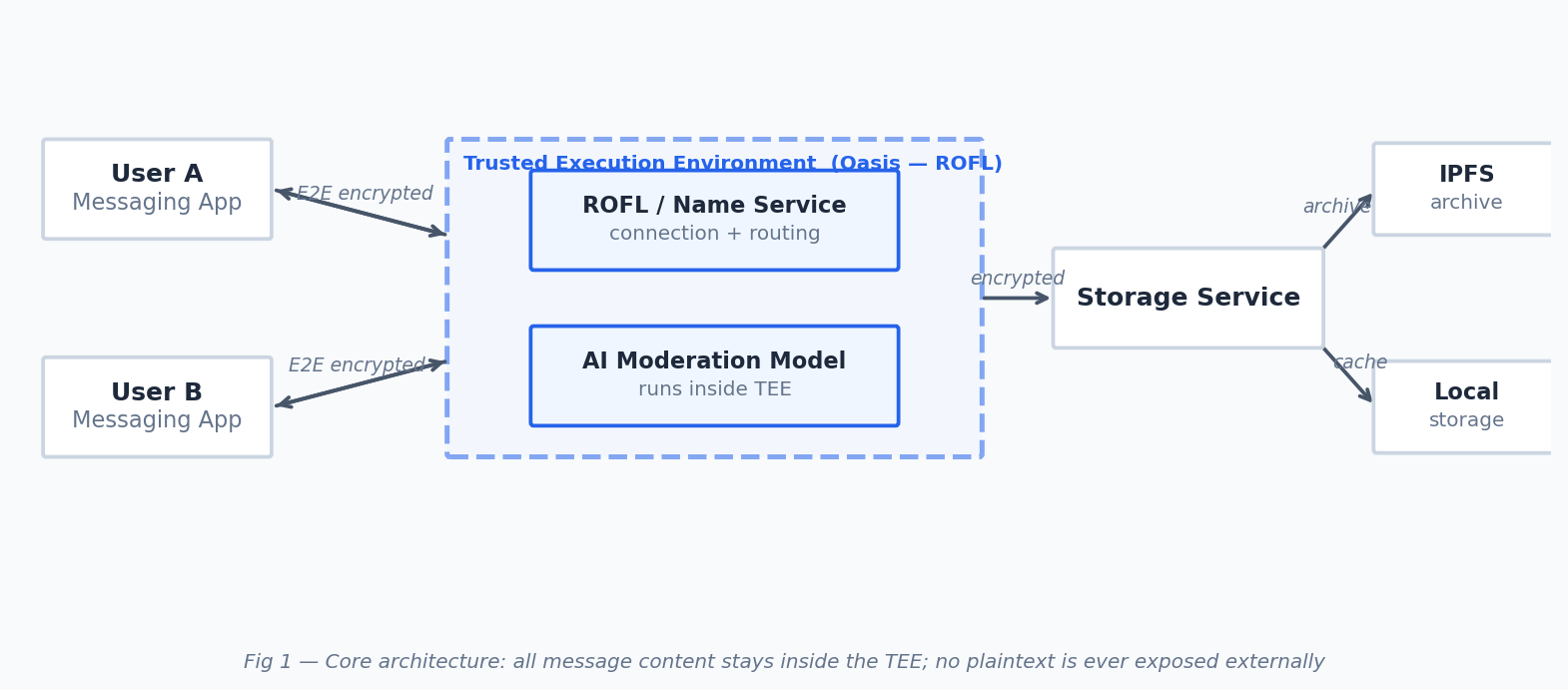

The baseline architecture is straightforward. Users connect through a TEE running on Oasis Network's ROFL framework, exchange messages, and the content never leaves the secure enclave unencrypted. A storage service handles persistence — encrypted blobs cached locally (on the device) or archived to IPFS.

Nothing novel here yet. The TEE handles routing and key management; messages flow end-to-end encrypted between users. No server, no operator, nobody sees the plaintext. This is the starting point, not the destination.

The immediate question is: how do you moderate a network where nobody can see the content?

Step 2 — Running AI Inside the Box

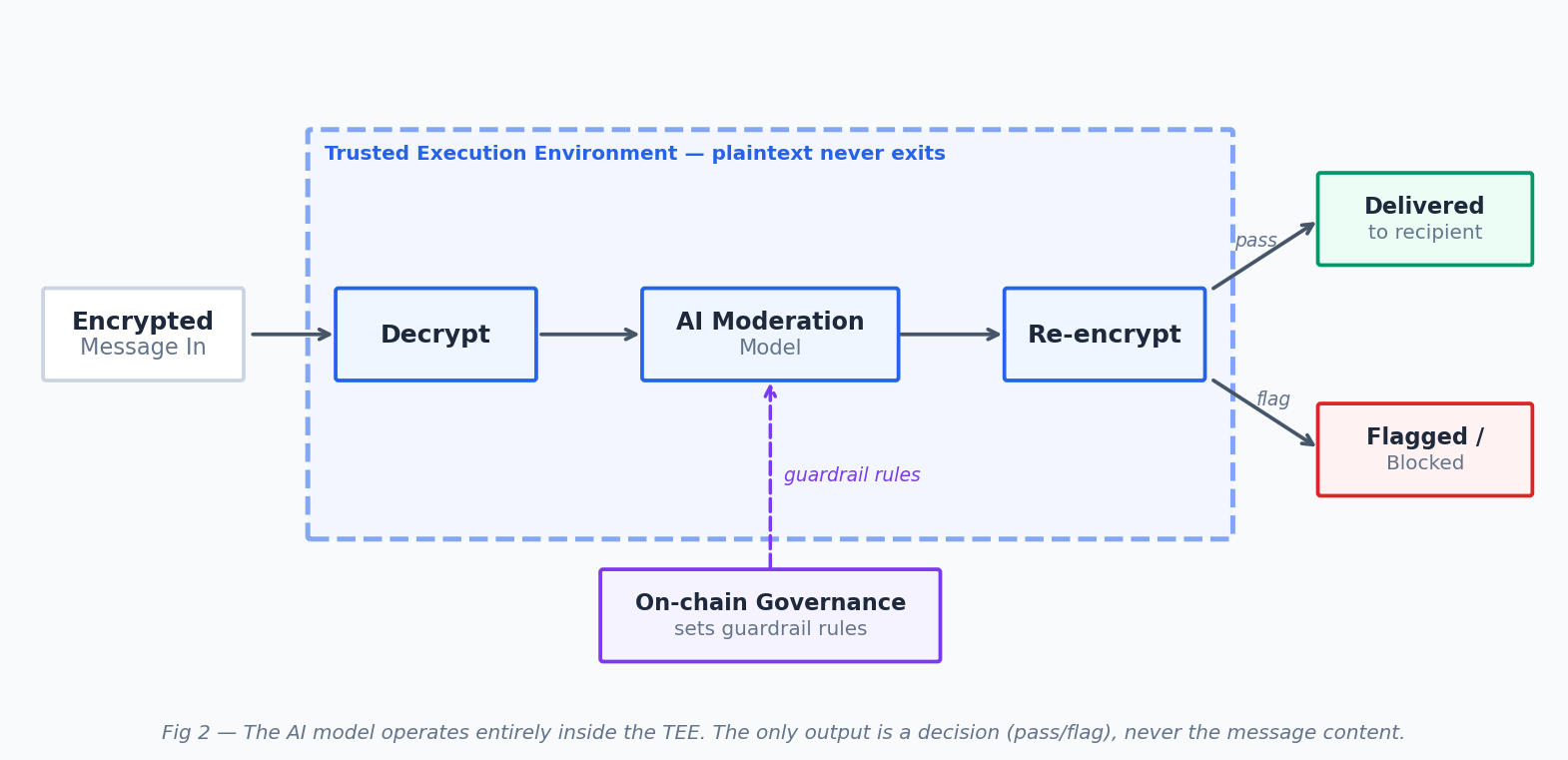

The conventional answer requires decrypting messages, sending them to an external AI service, and trusting that third party with the plaintext. That breaks the encryption guarantee entirely.

The TEE-native answer: run the AI model inside the enclave itself. The message is decrypted, assessed, and re-encrypted entirely within the TEE. The only thing that exits is a decision — pass or flag — never the content.
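
A minimal sketch of that boundary, under loud assumptions: `Enclave`, `toy_xor`, and `keyword_classifier` are hypothetical names invented for illustration, the XOR "cipher" is a placeholder with no security value, and the keyword check stands in for a real model. The point is only the shape of the interface: ciphertext goes in, a verdict comes out, and the plaintext never appears in the return value.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Verdict(Enum):
    PASS = "pass"
    FLAG = "flag"


def toy_xor(data: bytes, key: bytes) -> bytes:
    """Placeholder cipher for illustration only; NOT secure."""
    return bytes(b ^ key[i % len(key)] for i, b in enumerate(data))


def keyword_classifier(text: str) -> bool:
    """Toy stand-in for the AI model: True means 'flag this'."""
    blocked = {"scam-link", "malware"}
    return any(term in text.lower() for term in blocked)


@dataclass(frozen=True)
class Enclave:
    """Hypothetical stand-in for the code running inside the TEE."""
    key: bytes
    classifier: Callable[[str], bool]

    def moderate(self, ciphertext: bytes) -> Verdict:
        # The plaintext exists only as a local variable inside this method.
        plaintext = toy_xor(ciphertext, self.key).decode("utf-8")
        flagged = self.classifier(plaintext)
        # Only the decision crosses the boundary; the content does not.
        return Verdict.FLAG if flagged else Verdict.PASS


enclave = Enclave(key=b"demo-key", classifier=keyword_classifier)
benign = toy_xor("see you at noon".encode("utf-8"), b"demo-key")
harmful = toy_xor("click this scam-link now".encode("utf-8"), b"demo-key")

print(enclave.moderate(benign).value)
print(enclave.moderate(harmful).value)
```

In a real deployment the key would be provisioned to the enclave and never held by the caller; the sketch keeps it in the open only so the example is runnable.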

This is already verifiable in a mathematical sense. The model running inside the TEE can be cryptographically attested: anyone can check the attestation report and confirm that the specific model and guardrail code are exactly what is running. The logic is open. The deployment is provable.
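
To make the attestation check concrete, here is a rough sketch of the comparison at its core. It assumes the operator publishes the model weights and guardrail code, and that the attestation report carries a measurement derived from them. The `measure` and `matches_published` functions and the report layout are hypothetical. Real TEE attestation also involves verifying a hardware-backed signature over the report, which is omitted here.

```python
import hashlib
import hmac


def measure(model_weights: bytes, guardrail_code: bytes) -> str:
    """Hash both artifacts, length-prefixed so their boundary is unambiguous."""
    h = hashlib.sha256()
    for part in (model_weights, guardrail_code):
        h.update(len(part).to_bytes(8, "big"))
        h.update(part)
    return h.hexdigest()


def matches_published(reported: str, model_weights: bytes, guardrail_code: bytes) -> bool:
    """Recompute the measurement from the published artifacts and compare."""
    expected = measure(model_weights, guardrail_code)
    return hmac.compare_digest(expected, reported)


# Hypothetical published artifacts and a hypothetical attestation report.
published_model = b"weights-v1"
published_guardrails = b"flag if score > 0.8"
report = {"measurement": measure(published_model, published_guardrails)}

print(matches_published(report["measurement"], published_model, published_guardrails))
print(matches_published(report["measurement"], published_model, b"flag if score > 0.99"))
```

Any change to either artifact, even a single threshold, produces a different measurement, which is why a matching report ties the deployment to the disclosed logic.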

So you go to the App Store. You show TEE-based AI moderation. You disclose the model, the guardrails, the filter logic. You provide the attestation path.

The regulator still cannot trust it, because neither the provider nor the regulator actually knows what's happening inside the black box.

Step 3 — Making Behaviour Verifiable, Not Just the Code

Mathematical proof is not the same as human confidence. Attestation proves what is running. It doesn't show how it thinks.

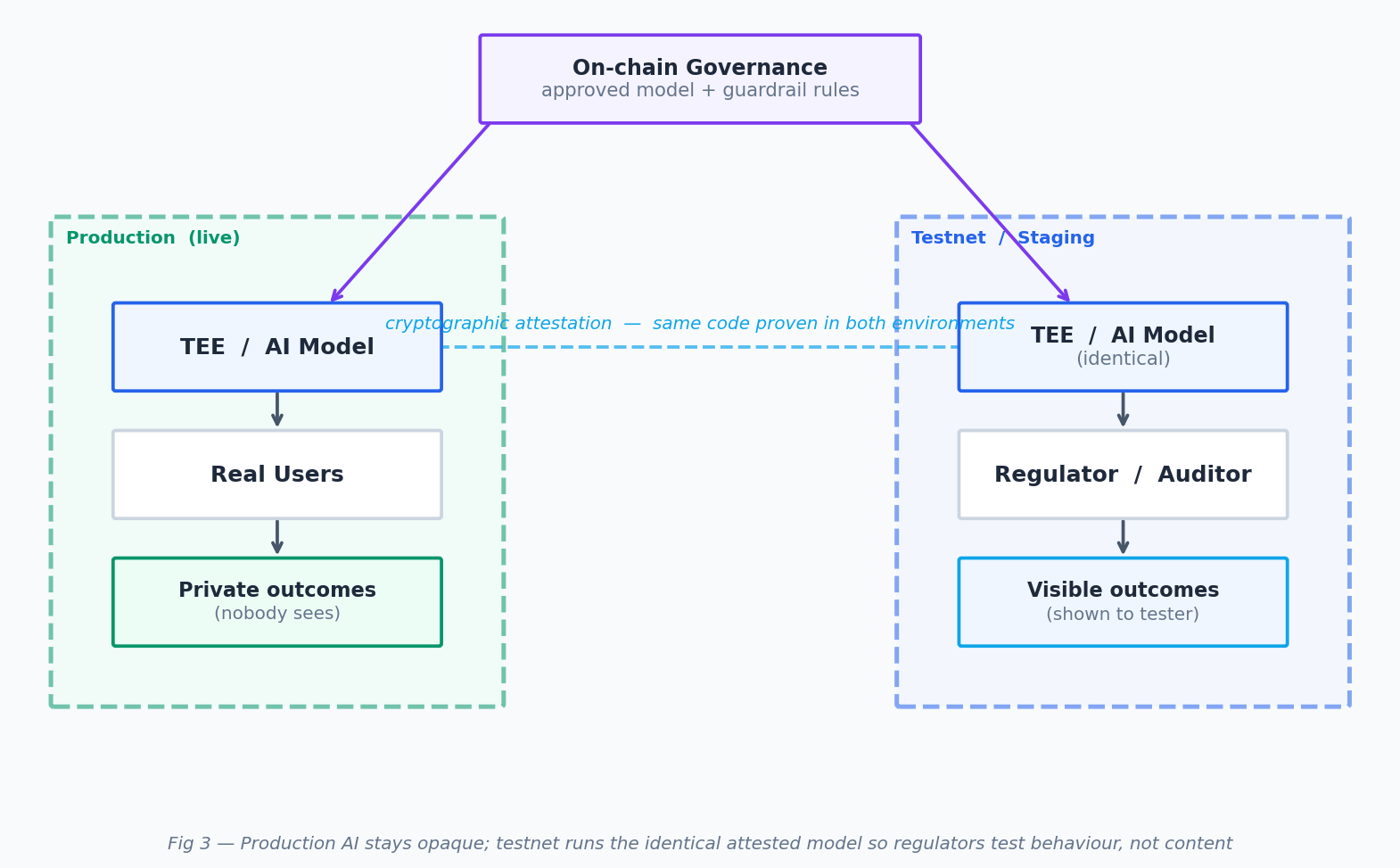

The potential solution is a parallel testnet running an identical, cryptographically-pegged version of the production AI. Not a sandbox or a demo — a live mirror, attested to the same governance-approved code.

Now, instead of asking a regulator to "play with it for five minutes," you offer them a service. They bring their own datasets — content they know should be flagged, edge cases, adversarial inputs — and they run it through the testnet model. The production AI stays dark. The testnet reveals how the model behaves.

The truth isn't disclosed in the content. It's disclosed in the behaviour.

This is the shift from mathematically verifiable to behaviourally verifiable. Regulators, app stores, or any third party can probe the testnet model without ever touching real user data. Because the two environments are cryptographically attested to run the same code, every testnet result is a verified proxy for production behaviour.
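
As a sketch of what a regulator-side probe might look like: the function below refuses to run unless the testnet and production measurements match, then scores a labelled dataset against the testnet model. The names `probe_testnet` and `BehaviourReport`, the toy substring model, and the sample data are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Tuple


@dataclass
class BehaviourReport:
    true_flags: int = 0    # harmful content that was flagged
    missed: int = 0        # harmful content that passed
    false_flags: int = 0   # benign content that was flagged
    true_passes: int = 0   # benign content that passed

    @property
    def miss_rate(self) -> float:
        harmful = self.true_flags + self.missed
        return self.missed / harmful if harmful else 0.0


def probe_testnet(
    prod_measurement: str,
    testnet_measurement: str,
    model: Callable[[str], bool],
    labelled_samples: Iterable[Tuple[str, bool]],
) -> BehaviourReport:
    # Results only transfer to production if both environments attest the same code.
    if prod_measurement != testnet_measurement:
        raise ValueError("testnet is not pegged to production")
    report = BehaviourReport()
    for text, should_flag in labelled_samples:
        flagged = model(text)
        if should_flag and flagged:
            report.true_flags += 1
        elif should_flag:
            report.missed += 1
        elif flagged:
            report.false_flags += 1
        else:
            report.true_passes += 1
    return report


def toy_model(text: str) -> bool:
    """Toy stand-in for the testnet model."""
    return "attack" in text.lower()


regulator_dataset = [
    ("plan the attack tonight", True),
    ("pl4n the att4ck", True),          # adversarial spelling
    ("heart attack symptoms", False),   # benign edge case
    ("lunch at noon", False),
]
report = probe_testnet("abc123", "abc123", toy_model, regulator_dataset)
print(report)
print(report.miss_rate)
```

The interesting output is the pattern of misses and false flags. The adversarial spelling and the benign medical phrase are the kind of edge cases a five-minute demo would never surface.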

Step 4 — Governance Over the Guardrails

There's still a question of authority: who decides what the AI flags?

In traditional platforms, it's a product team behind closed doors. In a regulated context, it becomes a negotiation with governments. Neither, unfortunately, fits a web3-native product.

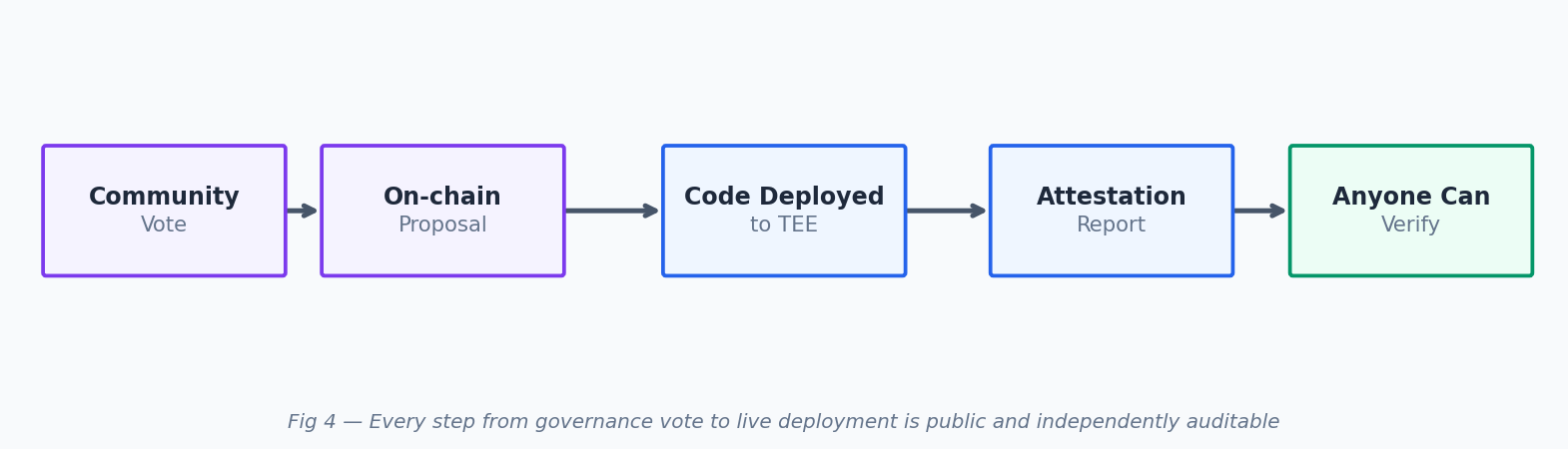

The answer is on-chain governance. The core moderation rules — guardrails, thresholds, the content categories the AI acts on — are controlled by community votes. Any change to the model's behaviour requires governance consensus, and because the deployed model is cryptographically attested, the governance-approved version is the only version that can run.
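
The link between a vote and what is allowed to run can be sketched as follows. This is an off-chain toy model, not a smart contract. The quorum and approval thresholds, the one-account-one-vote rule, and the names `Proposal`, `Governance`, and `may_run` are assumptions chosen for illustration rather than anything the concept specifies.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class Proposal:
    measurement: str  # hash of the proposed model + guardrails
    votes: Dict[str, bool] = field(default_factory=dict)  # voter -> approve?

    def vote(self, voter: str, approve: bool) -> None:
        self.votes[voter] = approve  # re-voting overwrites the earlier vote


@dataclass
class Governance:
    electorate: Set[str]
    quorum: float = 0.5     # assumed: share of the electorate that must vote
    threshold: float = 0.5  # assumed: share of cast votes that must approve
    approved: Set[str] = field(default_factory=set)

    def finalize(self, proposal: Proposal) -> bool:
        # Ignore votes from accounts outside the electorate.
        cast = {v: a for v, a in proposal.votes.items() if v in self.electorate}
        if len(cast) < self.quorum * len(self.electorate):
            return False
        yes = sum(cast.values())
        if yes > self.threshold * len(cast):
            self.approved.add(proposal.measurement)
            return True
        return False

    def may_run(self, attested_measurement: str) -> bool:
        # Only a governance-approved measurement is allowed to serve traffic.
        return attested_measurement in self.approved


gov = Governance(electorate={"alice", "bob", "carol", "regulator"})
proposal = Proposal(measurement="abc123")
proposal.vote("alice", True)
proposal.vote("bob", True)
proposal.vote("regulator", False)
proposal.vote("mallory", True)  # not in the electorate, so not counted

print(gov.finalize(proposal))
print(gov.may_run("abc123"))
print(gov.may_run("unapproved-build"))
```

Here the regulator votes against and loses, which is the point of the design: a regulator is one voter among several, with a public record of its position, not a party holding a private override.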

This reframes the relationship with regulators entirely. Rather than trusting a company's promise, regulators can become governance participants — not operators, not backdoor holders, but voters in a transparent system with public proposals and on-chain records. Apple and Google could participate in the same way. The AI acts on what governance approves. Governance is public. The chain of verifiability is complete.

Step 5 — Sentinel Agents: Verifiability That Never Sleeps

One problem remains: AI drift. A model that performed correctly at audit time may not behave identically a year later, even with the same weights, as the input distribution shifts over time. Attestation proves the code is right. It doesn't prove the live model is still behaving right.

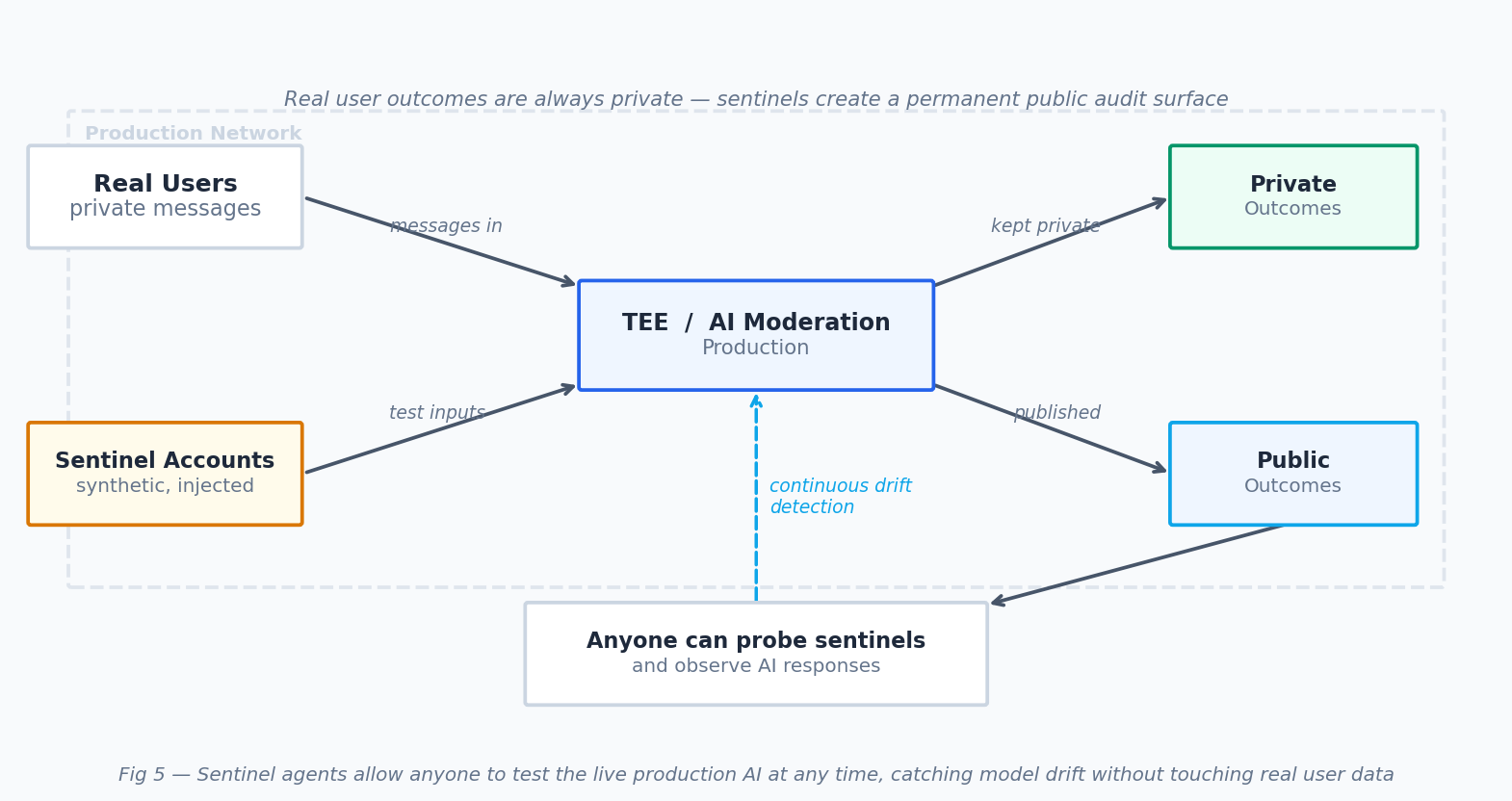

The answer is sentinel agents — synthetic accounts injected into the production network, processed by the same production AI as real users, with their outcomes made public.

Anyone can push known-bad content through a sentinel and observe whether it gets flagged. Anyone can push benign content and confirm it passes. This creates a continuous, crowdsourced stress test of the live model — not a snapshot audit, but an ongoing public signal. Real user privacy is untouched. The sentinel surface gives everyone a live window into production AI behaviour at any time.
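
One way to turn that stream of sentinel outcomes into a public signal is a rolling error rate, sketched below. The `SentinelMonitor` class, the window size, and the alert threshold are hypothetical choices; a real deployment would need to decide how outcomes are published and who sets the tolerance.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque


@dataclass
class SentinelMonitor:
    window: int = 100             # assumed: number of recent probes to keep
    max_error_rate: float = 0.05  # assumed: tolerated share of wrong verdicts
    outcomes: Deque[bool] = field(init=False)

    def __post_init__(self) -> None:
        # Old outcomes fall off automatically once the window is full.
        self.outcomes = deque(maxlen=self.window)

    def record(self, expected_flag: bool, actual_flag: bool) -> None:
        """Store whether production's verdict matched the probe's known label."""
        self.outcomes.append(expected_flag == actual_flag)

    @property
    def error_rate(self) -> float:
        if not self.outcomes:
            return 0.0
        return 1 - sum(self.outcomes) / len(self.outcomes)

    def drifting(self) -> bool:
        return self.error_rate > self.max_error_rate


monitor = SentinelMonitor(window=10, max_error_rate=0.2)

# Eight probes where production gave the expected verdict.
for _ in range(8):
    monitor.record(expected_flag=True, actual_flag=True)
print(monitor.drifting())

# Four known-bad probes that production let through.
for _ in range(4):
    monitor.record(expected_flag=True, actual_flag=False)
print(monitor.error_rate, monitor.drifting())
```

Because the window only holds recent probes, a model that was fine at audit time but starts missing new patterns shows up as a rising error rate rather than being averaged away by old successes.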

What Verifiable Privacy Actually Looks Like

Strip it back and the product is simple: an AI compliance service where encrypted data enters, is assessed against governance-approved rules, and exits. The assessment is private. The rules are public. The model is cryptographically proven. The behaviour is continuously tested.

Put together, you get something that hasn't existed before:

- Governance vote → rules are public and community-controlled

- Code deployed to TEE → attested and auditable

- Testnet mirror → behaviour is provable without exposing content

- Sentinel agents → live verification, ongoing, open to anyone

The messaging app is one use case. The architecture underneath is a general-purpose primitive — verifiable AI moderation for any system that needs to prove it is moderating, without proving what it is moderating.

This is what verifiable privacy looks like in practice: not a promise, not a marketing claim, but a system where the truth is structurally visible without the content ever being exposed.

Oasis makes any application private and verifiable, no matter where it runs. Build what couldn't exist before.

Built on Oasis Network · Governed on-chain · Verified in public

References

- Google's 2025 Android app policy update — WebProNews

- Signal privacy review — Mozilla Foundation

- Signal terms of service — signal.org

- Signal security analysis — Safety Detectives

- Oasis Network TEE technology — oasisprotocol.org